Good morning, good afternoon, good evening (I like to cover every possibility because who knows when you are reading this)! I hope you all enjoyed part one of this series where we talked about the simple process every eTMF system has inside it. In this blog let’s jump into something that is uniquely electronic and only possible because of the move to eTMF - Metrics!

I know what you are thinking, why do you need an eTMF for this? And ok, you are right - technically. But no one wants to be that person who’s technically right. You could manually take all those pieces of paper, count them up, log them all on Excel, and continue to log every time someone sends in a document, the date they sent it, the date on the document etc. You could even create plastic wallets for every expected piece of paper and count how many empty wallets you have versus full wallets. You could do a lot of things, but let’s be honest, you wouldn’t - would you?

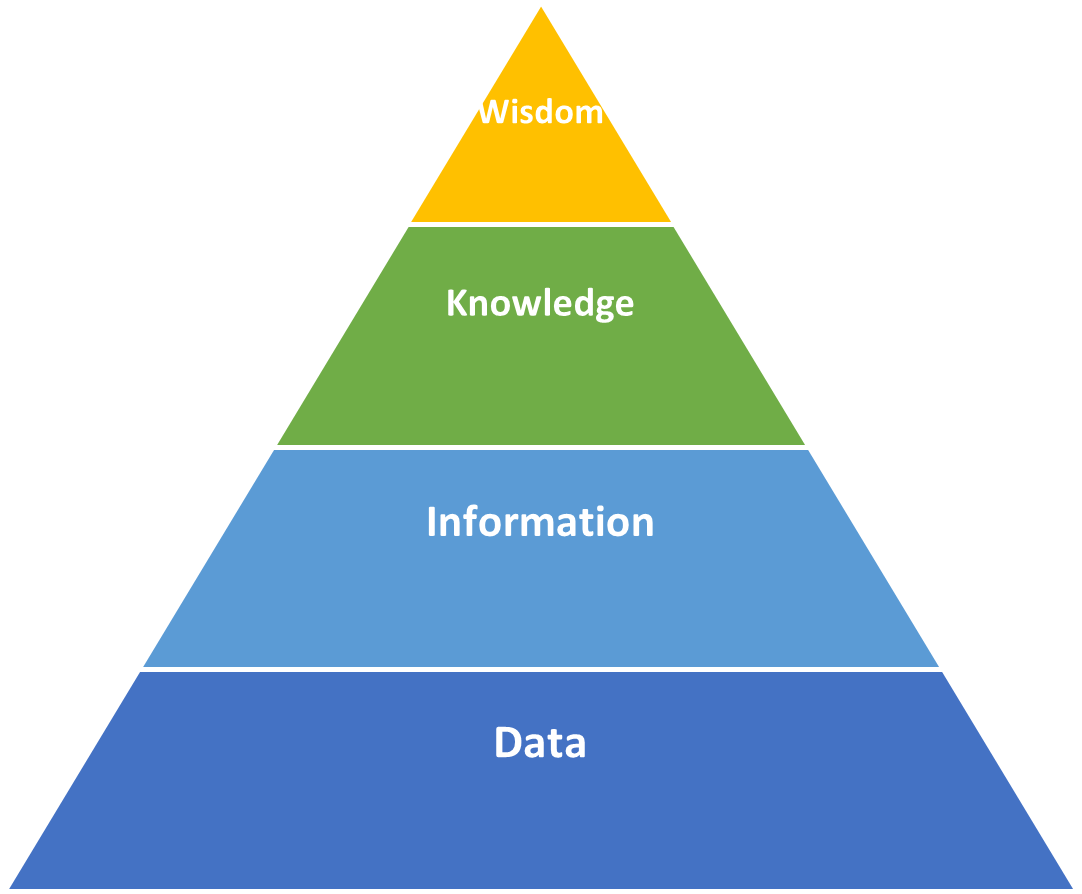

When we think about metrics we use words like data, analytics, reports, insights etc. What I would like to do first is to set the foundations for how to think of these things. Stay with me because it will help, I promise. Let’s start by defining some key ideas.

Data: All that stuff we record - the date a document is uploaded, the document date, when a document fails QC, or just simply what is there and what isn’t - is data. In your system you will have hundreds and thousands of data points. Alone this is just noise and what we need to do is structure this data in groups that will be helpful for us. This is what we would call…

Information: This is a collection of data in specific groups that tell us something. It could be a count of things, for example types of QC issues we have had over the last month; or maybe it’s more a this versus that, which shows what we have right now in the system and what we are missing. Structuring our data gives us information we can actually use and helps turn down the noise. Eventually when you get lots of information you can turn this into…

Knowledge: That information we can now put in a nice structured graph shows us things; for example, we see a real lag between when documents become available and when they are actually submitted to the TMF, and so we can confidently say we have a problem. This knowledge allows us to start putting corrective actions in place and means we are not just shooting in the dark trying to work out where we should focus our time. If only one of the three things we track is in trouble, why even worry about the other two? If it ain’t broke, don’t fix it.

Wisdom: You start to transcend time and space - you SEE the matrix in front of you. Or perhaps more importantly you start to see trends appearing; maybe it’s a particular functional group that is struggling with their documents, or a site that falls behind with their submissions. Once you get to this point you can start using your newfound wisdom to be proactive and anticipate problems before they even occur. Some say that people who reach this point actually achieve nirvana and a true sense of inner peace.

So why did I start off the blog with this? Well, I want you to keep this in mind while we talk about the next two things: Defining Metrics and Actionable Insights. We often jump a little too far forwards with these things and forget the WHY. Keeping in mind this build-up will be key to attaining success.

Let’s get into the real meaty sections now though.

Defining Metrics

I would strongly suggest that you crawl, walk then run here. If this is the first time you are capturing data, you don’t want to make it more complex than it needs to be. Start simple and capture things that will provide immediate benefit. Luckily there are three categories already defined and followed by almost everyone in the industry to help you out.

- Timeliness – This is the time it takes to get content into the system. We want to make sure that content is submitted to the TMF as soon as possible after it becomes available. Efficient submissions allow for effective decisions. Timeliness helps us track this and identify areas where we may be seeing a delay.

- Quality – In an ideal world every piece of content that enters the TMF would be perfect; but unfortunately, the world is not perfect. Quality is measuring how much of the content entering the TMF goes through the process as defined in blog one and reaches the other end without issue. Anything that fails a check should be flagged and recorded. Measuring how many pass first time, versus how many fail, gives us a great insight into the quality of content being submitted to the TMF.

- Completeness – When you create a study in an eTMF you would normally define what is going to be required: will you need specific plans, how many monitoring visit reports will you have, etc. Completeness looks at that overall number of expected documents and simply compares that to what you actually have in the system. If you are at the start of a study, being 5% complete may be completely normal and nothing to worry about, but a day before you archive the study, well, 5% will probably send you into heart palpitations!

There is some nuance to all of these, and the exact data you collect and information you produce will change per system: what time stamps will you use for timeliness, will you base completeness on milestones or just the overall total, and so on. However, if you monitor nothing but these three you will be moving in the right direction.
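To make the three categories a little more concrete, here is a minimal Python sketch of how they might be calculated. It is an illustration only, not how any particular eTMF does it: the `Document` fields, the choice of time stamps (document date versus upload date), the expected count of 20 and the sample data are all assumptions made up for this example.

```python
from dataclasses import dataclass
from datetime import date


@dataclass
class Document:
    """One filed document. All field names are hypothetical."""
    name: str
    available_on: date   # assumed: the date on the document itself
    submitted_on: date   # assumed: the date it was uploaded to the eTMF
    passed_first_qc: bool


def timeliness_days(docs):
    """Average number of days between availability and submission."""
    lags = [(d.submitted_on - d.available_on).days for d in docs]
    return sum(lags) / len(lags) if lags else None


def quality_rate(docs):
    """Share of documents that passed QC first time."""
    return sum(d.passed_first_qc for d in docs) / len(docs) if docs else None


def completeness(docs, expected_count):
    """Documents in the system as a share of documents expected."""
    return len(docs) / expected_count


# Made-up sample data, purely for illustration.
docs = [
    Document("Protocol", date(2024, 1, 2), date(2024, 1, 5), True),
    Document("Monitoring Plan", date(2024, 1, 10), date(2024, 1, 20), False),
    Document("Visit Report 1", date(2024, 2, 1), date(2024, 2, 3), True),
    Document("Visit Report 2", date(2024, 2, 15), date(2024, 2, 20), True),
]

print(f"Timeliness: {timeliness_days(docs):.1f} days on average")
print(f"Quality: {quality_rate(docs):.0%} passed QC first time")
print(f"Completeness: {completeness(docs, expected_count=20):.0%} of expected documents")
```

With this sample data the sketch reports an average lag of 5.0 days, 75% first-time quality and 20% completeness. Swapping in different time stamps, or counting completeness per milestone rather than overall, only changes the inputs; the three calculations stay this simple.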

Monitoring the numbers and identifying potential risks or issues is just the tip of the iceberg though, and it’s here that most people will start to fall over. They collect all manner of data and put it into pretty graphs and charts using fun colours and keys, but they do nothing with it. Nothing is actioned and if that’s the case, well why even bother?

Actionable Insights

Although this is at the end of the blog it should actually be one of the things you think about first. What is the point of collecting this data? What will we do with this information? Will the knowledge gained actually change the way we do things?

Ask yourself ‘What does this tell me?’, ‘Can we do anything about it?’ and ‘Who cares about it?’. There are a lot of things you could start recording and looking at, but unless they are valuable why go to the effort? Do we really care that Wednesday afternoons are the busiest times for uploading documents? Or how about that completeness was at 5% Monday, 5% Tuesday, 7% Wednesday, then back to 5% Thursday because someone added a new site? The answer is ‘no, you don’t care’, trust me on this. Even with that knowledge, are you going to do anything with it, or can you do anything with it? It’s not like you can create more Wednesdays to get the most out of people.

The other danger I see a lot is that people gain all this knowledge and then do nothing with it. They run a report each month on Quality and see it slowly getting worse, but when asked what the plan is they respond with ‘let’s see what happens next month’. In effect, they are leaving it up to fate to decide; if it gets worse then you have watched your TMF crumble more and more for a month, and if it gets better, amazing, but why did it get better and why did it become a problem in the first place?

Ideally, what you want to do is clearly define what you will measure, then define the criteria for what ‘good’ actually looks like - personally I am a big fan of the good old Red Amber Green (RAG) status. Finally, make sure you have a plan for what to do when things fall out of green; what initial steps will you take to identify the root cause? Sometimes that’s enough - you look and realise the reason things took a while to get into the TMF last month is because it was Christmas and everyone was enjoying themselves, there is no need to panic! The real danger is if you sit and do nothing. When the bogeyman (inspector, for those who didn’t read the first series of blogs) comes a-knocking and asks how you maintain oversight of the TMF and what you do to make sure you are compliant with your own set criteria, you can’t answer. All you can do is show the pretty coloured graph you created - maybe if you print it off in Black and White they won’t notice?
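If it helps to see the ‘define good, then plan for not-good’ idea written down, here is a minimal Python sketch of a RAG check. Everything in it is an assumption for illustration: the `rag_status` function, the thresholds (90% and 80% for quality, 5 and 10 days for timeliness) and the suggested first steps are hypothetical, and your own criteria should come from your own processes.

```python
def rag_status(value, green_at, amber_at, higher_is_better=True):
    """Return 'Green', 'Amber' or 'Red' for a metric value.

    green_at and amber_at are the cut-offs for each colour. For metrics
    where lower is better (such as days of delay), pass higher_is_better=False.
    """
    if not higher_is_better:
        # Flip the signs so the same comparisons work for "lower is better".
        value, green_at, amber_at = -value, -green_at, -amber_at
    if value >= green_at:
        return "Green"
    if value >= amber_at:
        return "Amber"
    return "Red"


# Hypothetical first steps to take when a metric falls out of green.
FIRST_STEPS = {
    "Green": "No action needed; keep monitoring.",
    "Amber": "Look for the root cause (holidays, a new site, one team?).",
    "Red": "Agree corrective actions, assign an owner and re-check.",
}

# Hypothetical thresholds: quality as a pass rate, timeliness in days.
quality = rag_status(0.85, green_at=0.90, amber_at=0.80)
timeliness = rag_status(12, green_at=5, amber_at=10, higher_is_better=False)

for metric, status in [("Quality", quality), ("Timeliness", timeliness)]:
    print(f"{metric}: {status} -> {FIRST_STEPS[status]}")
```

The point of pairing each colour with a first step is that the report never ends at the colour: an Amber or Red always comes with something to do next, which is exactly what you want to be able to show an inspector.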

Summary

Hopefully this has provided some insight into the world of metrics and reporting. It can be pretty fun, and I know for a fact a lot of you reading this are data nerds, so chipping away at why that report changed from Green to Amber this month, or investigating the mystery of the phantom problem, can really provide some excitement. It’s OK to admit it, you are among friends here.

Thank you as always for taking the time to give the blog a read through. If you want to chat through anything I have spoken about here, reach out any time to us at Phlexglobal. We would be more than happy to jump on a call and nerd out about the TMF. Next week I will be taking a break and handing over my quill to Jacki Petty, who will be discussing what people actually do when managing a trial in an eTMF. Spoiler alert, it’s more than you think. Until then…

Take Care

Rob Jones

If you missed the Clinical Trials Series Launch you can now watch it on demand here. And don't forget to sign up for the series close Ask An Expert session - you can register here.